Summary

I had the very rare opportunity of releasing a brand new website recently. At our brand Explore Media we’ve launched a blog covering a few topics in Fort Worth, TX. We decided to build the website on a production server but block spiders from crawling the site’s content until the site was ready for soft launch.

The website is a fairly simple WordPress install using a theme from Theme Forest and a few extra bells and whistles. Even though the theme is largely out of the box, it still required quite a bit of configuration and customization. My team and I spent several weeks working on the site and the content we wanted before we announced the launch to locals (huge shoutout to my intern Ahsan Punjani for all his work on this). On Monday, December 4th, we entered our soft launch stage: we added the Meta Robots Noindex tag to much of the theme’s demo content, attachment pages, and stock categories, then removed the block in Robots.txt to allow engines to crawl the site. The first thing we did was use Google Search Console to “fetch” the home page and submit it, along with all pages the home page linked to, to Google’s index. I used this rare opportunity to record a few observations on how Google treats a new website.
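For readers who want to confirm that a Robots.txt block really has been lifted before submitting pages, here is a minimal sketch using Python's standard `urllib.robotparser`. The two Robots.txt bodies and the `example.com` URL are illustrative assumptions, not our actual files, and `can_crawl` is a hypothetical helper name.

```python
from urllib.robotparser import RobotFileParser


def can_crawl(robots_txt: str, url: str, agent: str = "Googlebot") -> bool:
    """Return True if the given Robots.txt text lets `agent` fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


# Assumed pre-launch file: block every crawler from the whole site.
BLOCKED = "User-agent: *\nDisallow: /\n"

# Assumed soft-launch file: an empty Disallow permits everything.
OPEN = "User-agent: *\nDisallow:\n"

# Placeholder URL, not the real site.
URL = "https://example.com/sample-post/"

print("pre-launch crawlable:", can_crawl(BLOCKED, URL))
print("soft-launch crawlable:", can_crawl(OPEN, URL))
```

Keep in mind that a crawler can only read a Meta Robots Noindex tag on a page it is allowed to fetch, so the tag has no effect while the Robots.txt block is still in place.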

- Google indexed the entire site (about 150 pages) in less than 30 minutes.

- Google originally indexed all pages on the website, even those using the Meta Robots Noindex tag.

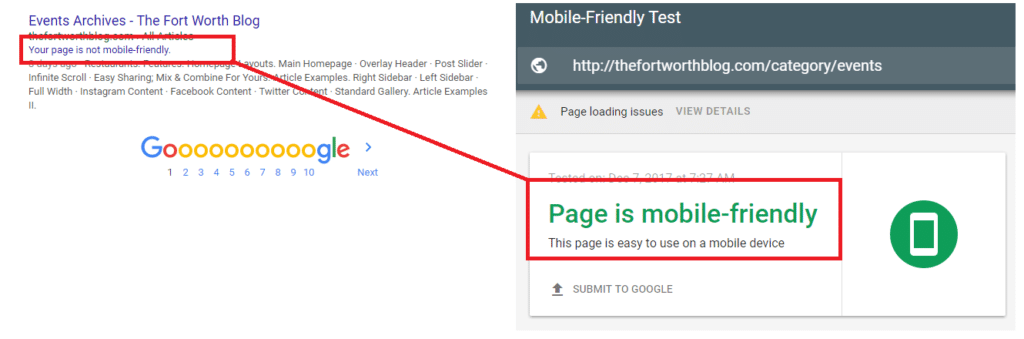

- Google said that all pages on the website were not mobile-friendly, even though the Mobile-Friendly Test tool said they were.

- Google indexed the HTTP-WWW root domain variant even though it 301 redirects to the HTTP only variant.
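To double-check the last observation on your own site, it helps to list every scheme and host variant of the root domain and look at what each one returns before any redirect is followed. The sketch below is one way to do that with the standard library only; `domain_variants` and `first_hop` are hypothetical helper names, and `example.com` stands in for the real domain.

```python
import http.client
from typing import List, Optional, Tuple
from urllib.parse import urlsplit


def domain_variants(url: str) -> List[str]:
    """Return the HTTP/HTTPS and WWW/non-WWW variants of a site's root URL."""
    host = urlsplit(url).hostname or ""
    bare = host[4:] if host.startswith("www.") else host
    return [
        f"{scheme}://{prefix}{bare}/"
        for scheme in ("http", "https")
        for prefix in ("", "www.")
    ]


def first_hop(url: str, timeout: float = 10.0) -> Tuple[int, Optional[str]]:
    """Send a HEAD request without following redirects.

    Returns the status code and the Location header (None if absent).
    This performs a real network request when called.
    """
    parts = urlsplit(url)
    if parts.scheme == "https":
        conn = http.client.HTTPSConnection(parts.hostname, timeout=timeout)
    else:
        conn = http.client.HTTPConnection(parts.hostname, timeout=timeout)
    try:
        conn.request("HEAD", parts.path or "/")
        response = conn.getresponse()
        return response.status, response.getheader("Location")
    finally:
        conn.close()


# Offline part: list the variants for a placeholder domain.
for variant in domain_variants("http://www.example.com/"):
    print(variant)
```

Calling `first_hop(variant)` for each variant should show a 301 status and the preferred URL in the Location header for every variant except the preferred one, assuming the redirects are set up as intended.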

Why is Google indexing pages with a noindex meta robots tag, listing every page as not being mobile friendly, and indexing and listing the HTTP-WWW root domain variant even though it’s a 301 redirect?

The consensus seems to be that when a new website or page is submitted to Google, the engine’s first priority is to crawl the content and make it available in the index. That first crawl does only that. Other signals, such as the meta robots tag, mobile-friendliness, and redirects, aren’t taken into consideration until other parts of the algorithm examine the crawl data or until subsequent crawls. Dixon Jones of Majestic said their crawling works in a similar fashion: getting the information crawled and stored in the index is the first priority, and making sense of it comes later.

What does this mean for your website? There are a few big takeaways that I can see.

1. Remove Demo / Filler Content before a soft launch – If you’re using a website with filler or demo content, it’s probably best to remove that content before launching your site and/or allowing engines to crawl it, as the content will get indexed anyway. Even in situations like ours, where we knew no one would find the site unless we showed them, we could now have content indexed in Google that we don’t really want people to see. Of course, if you find yourself in this predicament you can always use Google Search Console’s “Remove URL” feature to temporarily hide the content from the index. When the temporary hide expires and the content is re-added to the index, there’s a good chance Google will examine the meta robots tag for a directive.

2. Soft launch a week prior to your actual launch date – Google displaying the warning that a page is not mobile-friendly is frustrating. Three days after the website was submitted via Google Search Console, numerous pages still carry the warning in the index, though the number has dropped considerably over the past few days. In a world dominated by mobile search queries this may cause issues (if your website could be found in Google this early after launching). If this might be a concern for you, I would recommend a soft launch about a week prior to your actual launch. Also, if you see a mobile-friendly page carrying the warning, you can try running it through Google’s Mobile-Friendly Test tool. If that tool says the page is mobile-friendly, click the button that says “Submit to Google”. We found this to be correlated with the warning being removed quickly.

3. Always have an SEO consultant nearby before and after a new website or design launch – We often tell clients that when building a new website or launching a new design, having an SEO consultant review things pre-launch and post-launch is critical, and these issues showcase that. Had this website belonged to a big, well-established brand, the way Google crawls, indexes, and updates pages could have caused some early issues.
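As part of a pre-launch review, it can be useful to audit which pages actually carry a noindex directive, so you know which demo pages are relying on the tag alone. The following is a rough sketch using Python's built-in `html.parser`; the class name, the `has_noindex` helper, and the sample HTML strings are all assumptions made for illustration.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            for token in attr.get("content", "").split(","):
                self.directives.add(token.strip().lower())


def has_noindex(html: str) -> bool:
    """Return True if the page asks engines not to index it."""
    parser = RobotsMetaParser()
    parser.feed(html)
    # "none" is shorthand for "noindex, nofollow".
    return bool({"noindex", "none"} & parser.directives)


# Made-up sample pages: one demo page with the tag, one real post without it.
DEMO_PAGE = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
REAL_POST = '<html><head><meta name="robots" content="index, follow" /></head></html>'

print("demo page noindex:", has_noindex(DEMO_PAGE))
print("real post noindex:", has_noindex(REAL_POST))
```

Any demo page flagged here is a candidate for outright removal before the soft launch, since the first crawl may index it regardless of the tag.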

What happened in Bing, Yahoo!, and DuckDuckGo after our website’s soft launch?

In short, nothing. Bing and Yahoo! (same engine) somehow had already indexed the website’s home page (no clue how they found it) but respected the Robots.txt block and displayed only the root domain. Even several days after the Robots.txt block was removed, Bing has not updated the listing. In DuckDuckGo the website is not even listed. In all fairness, we did not use any submission tools for these engines like we did for Google, and their behavior is entirely as expected.